Details about CI/CD pipelines are rarely disclosed. Let’s change this. In this article, we show how our pipeline is structured and why a CI/CD pipeline does not replace the local development environment.

What is a CI/CD pipeline?

For the sake of completeness, here is a brief explanation of what a CI/CD pipeline is. If you want the full details, see our glossary article “CI/CD”. In a nutshell: the pipeline combines the individual steps of CI and CD. It consists of jobs (what needs to be done?) and stages (which jobs should be executed when?). The ideal CI/CD pipeline looks like this:

In practice, actual CI/CD pipelines usually differ from this ideal model. But to what extent? If you look for concrete examples, disappointment quickly sets in: you find generic examples, but details about real pipelines are hardly ever revealed. That is why we have decided to present ours.
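The two terms from the definition, jobs and stages, can be made concrete with a minimal, generic sketch in Python. This is not Cloudomation's API. `Job`, `Stage` and `run_pipeline` are hypothetical names. Stages run in order, and the pipeline stops after the first stage in which a job fails.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Job:
    """A unit of work ("what needs to be done?"); run() returns True on success."""
    name: str
    run: Callable[[], bool]


@dataclass
class Stage:
    """A group of jobs executed at the same point in the pipeline ("when?")."""
    name: str
    jobs: List[Job] = field(default_factory=list)


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop after the first stage that has a failing job."""
    log: List[str] = []
    for stage in stages:
        # Jobs of one stage run sequentially here; many CI tools run them in parallel.
        results = [(job.name, job.run()) for job in stage.jobs]
        for name, ok in results:
            log.append(f"{stage.name}/{name}: {'ok' if ok else 'failed'}")
        if not all(ok for _, ok in results):
            log.append(f"stopped at stage {stage.name}")
            break
    return log


pipeline = [
    Stage("build", [Job("compile", lambda: True)]),
    Stage("test", [Job("unit-tests", lambda: True), Job("lint", lambda: False)]),
    Stage("deploy", [Job("deploy-staging", lambda: True)]),
]

for line in run_pipeline(pipeline):
    print(line)
```

Because the `lint` job fails, the `deploy` stage is never reached.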

Our CI/CD pipeline

First of all: There are various tools for creating a CI/CD pipeline. One tool that is often used is Jenkins. Our CI/CD pipeline is implemented with our product Cloudomation. You can read about the exact differences between Cloudomation and Jenkins on our comparison page.

Structure of our CI/CD pipeline

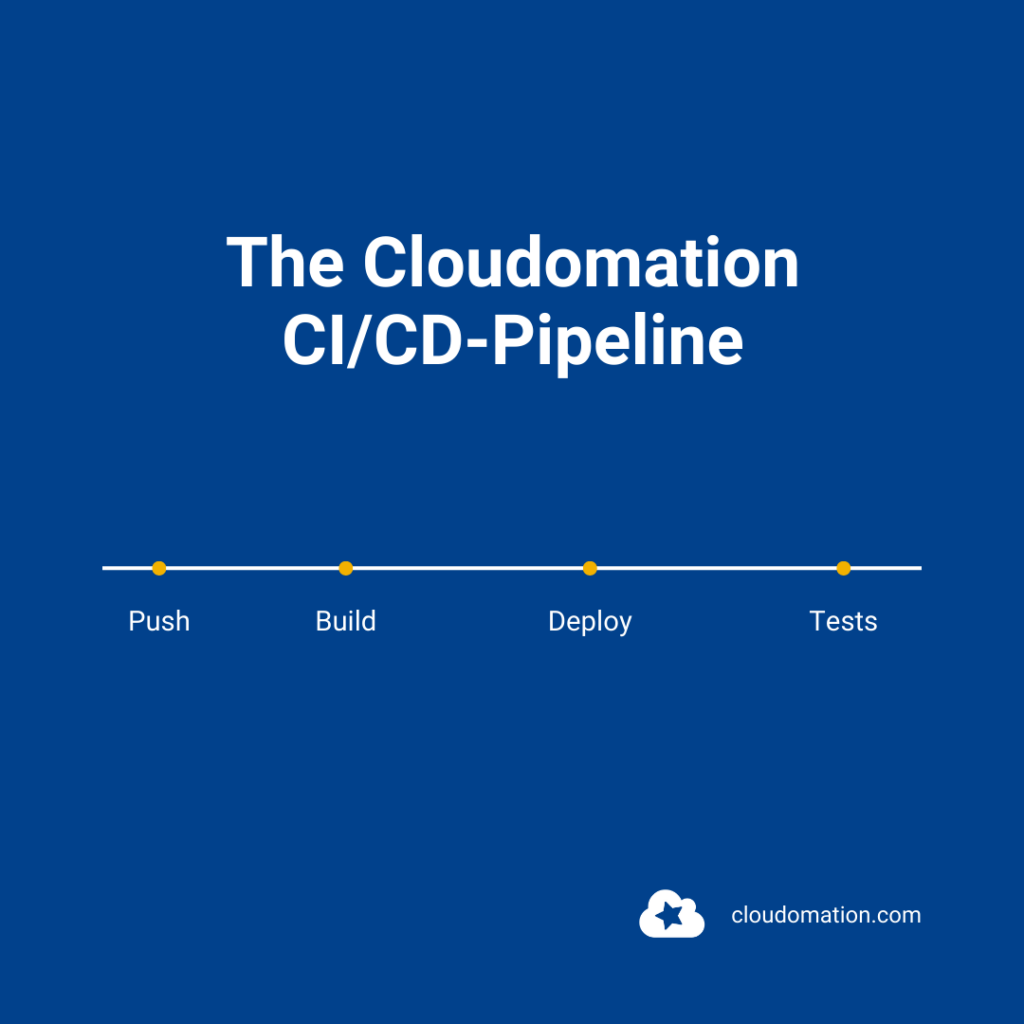

Essentially, the Cloudomation pipeline consists of the following steps:

- Receive push

- Process push

- Process build request

- Process deploy job

- Process integration test jobs

Let’s look at the individual phases in detail.

#1 Receive push

In this step, the pipeline is triggered by a webhook call from GitLab: every time someone pushes to one of our repositories, Cloudomation Engine comes into play.

The webhook call contains:

- who made the push,

- information about all commits included in the push.

Then the processing begins.
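The sketch below shows what receiving the push could look like. It assumes a GitLab project webhook configured with a secret token. GitLab sends that token in the `X-Gitlab-Token` header and marks push events with `X-Gitlab-Event: Push Hook`. The payload fields used here (`user_username`, `project.path_with_namespace`, `ref`, `commits[].id`) are part of GitLab's push event. `receive_push`, `PushInfo` and `WEBHOOK_SECRET` are hypothetical names. The HTTP server around the function is omitted. Note that a web framework would normally give case-insensitive header access, which a plain `dict` does not.

```python
import hmac
import json
from dataclasses import dataclass
from typing import Dict, List

# Assumption: the secret token configured for the webhook in GitLab (placeholder).
WEBHOOK_SECRET = "change-me"


@dataclass
class PushInfo:
    pusher: str
    project: str
    ref: str
    commit_ids: List[str]


def receive_push(headers: Dict[str, str], body: bytes) -> PushInfo:
    """Validate a GitLab push webhook request and extract who pushed which commits."""
    token = headers.get("X-Gitlab-Token", "")
    # Constant-time comparison avoids leaking the secret through timing differences.
    if not hmac.compare_digest(token.encode(), WEBHOOK_SECRET.encode()):
        raise PermissionError("invalid webhook token")
    if headers.get("X-Gitlab-Event") != "Push Hook":
        raise ValueError("not a push event")
    payload = json.loads(body)
    return PushInfo(
        pusher=payload["user_username"],
        project=payload["project"]["path_with_namespace"],
        ref=payload["ref"],
        commit_ids=[commit["id"] for commit in payload["commits"]],
    )


# Usage with a trimmed-down example payload (made-up values).
sample_body = json.dumps({
    "object_kind": "push",
    "user_username": "jdoe",
    "ref": "refs/heads/main",
    "project": {"path_with_namespace": "acme/engine"},
    "commits": [{"id": "a1b2c3"}, {"id": "d4e5f6"}],
}).encode()
headers = {"X-Gitlab-Token": "change-me", "X-Gitlab-Event": "Push Hook"}
info = receive_push(headers, sample_body)
print(info.pusher, info.ref, info.commit_ids)
```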

#2 Process push

This step is quickly described: a build job is created, and its processing starts.
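Creating the build job can be sketched as follows. The sketch assumes that the pushed ref and the commit the branch points to after the push are known. `BuildJob`, `process_push` and the in-memory ID counter are hypothetical. The sketch skips tag pushes and branch deletions, which push webhooks report with an all-zero SHA. That skipping is a choice of this sketch, not necessarily of the actual pipeline.

```python
import itertools
from dataclasses import dataclass, field
from typing import Optional

_job_ids = itertools.count(1)  # hypothetical in-memory job ID source
NULL_SHA = "0" * 40  # all-zero SHA reported when a branch is deleted


@dataclass
class BuildJob:
    project: str
    branch: str
    commit: str
    job_id: int = field(default_factory=lambda: next(_job_ids))
    status: str = "created"


def process_push(project: str, ref: str, head_sha: str) -> Optional[BuildJob]:
    """Create a build job for the commit a branch points to after the push."""
    if not ref.startswith("refs/heads/") or head_sha == NULL_SHA:
        return None  # tag push or branch deletion: nothing to build in this sketch
    job = BuildJob(project, ref[len("refs/heads/"):], head_sha)
    job.status = "running"  # a real system would hand the job to a worker here
    return job


job = process_push("acme/engine", "refs/heads/main", "a1b2c3")
print(job)
```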

#3 Process build request

During processing, the pipeline branches to a specific build flow depending on the component. Each build flow then creates the build in the following steps:

- Get sources

- Unit tests

- Compilation

- Packaging

- Upload packages to the artefact storage

- Master data check: which systems should be updated automatically with the current component/branch? A deploy job is then created for each of these systems, and its processing starts.
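The fixed order of the build steps and the master data check can be sketched like this. `MASTER_DATA` is a hypothetical in-memory stand-in for the master data. `run_step` is an injected callable that performs one step and raises on failure. A failing step, such as the unit tests, therefore stops the build before any deploy job is created.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

BUILD_STEPS = ["get sources", "unit tests", "compilation", "packaging", "upload packages"]

# Hypothetical master data: systems to update automatically per (component, branch).
MASTER_DATA: Dict[Tuple[str, str], List[str]] = {
    ("engine", "main"): ["internal", "demo"],
    ("engine", "release"): ["internal", "customer-a"],
}


@dataclass
class DeployJob:
    system: str
    component: str
    package: str


def run_build(component: str, branch: str, commit: str,
              run_step: Callable[[str, str], None]) -> List[DeployJob]:
    """Run all build steps in order, then create one deploy job per target system."""
    for step in BUILD_STEPS:
        run_step(component, step)  # expected to raise if the step fails
    # Hypothetical package naming; "/" in branch names would break file paths.
    package = f"{component}-{branch.replace('/', '-')}-{commit}.tar.gz"
    systems = MASTER_DATA.get((component, branch), [])
    return [DeployJob(system, component, package) for system in systems]


jobs = run_build("engine", "main", "a1b2c3", lambda comp, step: print(comp, step))
print([job.system for job in jobs])
```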

#4 Process deploy job

This step covers integration test jobs and deployments. If the target is the internal system, an integration test job is created first, and its processing starts. As in the previous step, the pipeline then branches to a component-specific deploy procedure, which carries out the actual deployment. Its steps include, for example:

- Stop system

- Install updates

- Start system

- Import objects, etc.
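The deploy steps can be sketched as follows. `TargetSystem` is a hypothetical stand-in for a real system. The sketch assumes the system is started again even when installing the update fails. That restart is this sketch's own choice, not a documented part of the pipeline. Objects are imported only after a successful install.

```python
from typing import List


class TargetSystem:
    """Hypothetical stand-in for a system that receives deployments."""

    def __init__(self, name: str, broken_installer: bool = False):
        self.name = name
        self.running = True
        self.version = "1.0"
        self.objects: List[str] = []
        self.broken_installer = broken_installer  # simulates a failing install

    def stop(self) -> None:
        self.running = False

    def install(self, version: str) -> None:
        if self.broken_installer:
            raise RuntimeError(f"installing {version} on {self.name} failed")
        self.version = version

    def start(self) -> None:
        self.running = True

    def import_objects(self, objects: List[str]) -> None:
        self.objects.extend(objects)


def deploy(system: TargetSystem, version: str, objects: List[str]) -> None:
    """Stop system, install update, start system, import objects."""
    system.stop()
    try:
        system.install(version)
    finally:
        # Assumption: the system is started again even if the install fails.
        system.start()
    system.import_objects(objects)  # only reached after a successful install


internal = TargetSystem("internal")
deploy(internal, "2.0", ["workflow-a", "workflow-b"])
print(internal.version, internal.running, internal.objects)
```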

Once the update has been completed, integration test jobs are created again for the internal system: smoke tests, system tests in the case of a nightly build, load tests and infrastructure tests. Then the processing of these integration test jobs begins.
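The choice of integration test jobs after the update can be expressed as a small function. The text does not say whether load and infrastructure tests run after every update. The sketch assumes they do and adds system tests only for nightly builds. `IntegrationTestJob` and `post_deploy_test_jobs` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class IntegrationTestJob:
    system: str
    category: str


def post_deploy_test_jobs(system: str, nightly: bool) -> List[IntegrationTestJob]:
    """Create the integration test jobs that follow an update of the internal system."""
    if system != "internal":
        return []
    categories = ["smoke"]
    if nightly:
        categories.append("system")  # system tests only for nightly builds
    # Assumption: load and infrastructure tests run after every update.
    categories += ["load", "infrastructure"]
    return [IntegrationTestJob(system, category) for category in categories]


for test_job in post_deploy_test_jobs("internal", nightly=True):
    print(test_job.category)
```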

#5 Process integration test jobs

In this step, the user logs into the system and all integration tests of the selected category are started. The pipeline then waits for the tests to finish and collects the results for review.
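Starting all tests of a category, waiting for them and collecting the results maps naturally onto a thread pool. The sketch omits the login step. `TESTS` is a hypothetical registry, and the test functions are placeholders. A test that raises an exception counts as failed.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def _db_check() -> bool:
    raise ConnectionError("database unreachable")  # simulates a crashing test


# Hypothetical test registry: category -> {test name: test function}.
TESTS: Dict[str, Dict[str, Callable[[], bool]]] = {
    "smoke": {"login page loads": lambda: True, "api responds": lambda: True},
    "infrastructure": {"dns resolves": lambda: True, "database reachable": _db_check},
}


def run_category(category: str, max_workers: int = 4) -> Dict[str, bool]:
    """Start all tests of one category in parallel, wait for them, collect results."""
    tests = TESTS.get(category, {})
    results: Dict[str, bool] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(test) for name, test in tests.items()}
        for name, future in futures.items():
            try:
                results[name] = bool(future.result())  # blocks until the test finishes
            except Exception:
                results[name] = False  # a crashing test counts as failed
    return results


print(run_category("infrastructure"))
```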

Why is the pipeline structured this way?

Our pipeline is implemented in our own product. This gives us a complete overview of all steps in one central place. If a step fails, a link takes us directly to the corresponding execution, where we can debug it. Each step can also be restarted manually, for example:

- Build the same push again with new build scripts.

- Deploy the same build again (production / customer system).

- Run tests again or run more tests.
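Re-running a step with the same inputs requires that each execution records what it was called with. The sketch below assumes steps are plain Python callables. `Pipeline`, `StepRun` and `rerun` are hypothetical names. The usage rebuilds the same push with newer build scripts.

```python
import itertools
from dataclasses import dataclass
from typing import Any, Callable, Dict, Tuple


@dataclass
class StepRun:
    step: str
    inputs: Dict[str, Any]


class Pipeline:
    """Hypothetical pipeline that records each step's inputs so it can be re-run."""

    def __init__(self, steps: Dict[str, Callable[..., str]]):
        self.steps = steps
        self.history: Dict[int, StepRun] = {}
        self._ids = itertools.count(1)

    def run(self, step: str, **inputs: Any) -> Tuple[int, str]:
        run_id = next(self._ids)
        self.history[run_id] = StepRun(step, dict(inputs))
        return run_id, self.steps[step](**inputs)

    def rerun(self, run_id: int, **overrides: Any) -> Tuple[int, str]:
        """Re-execute a recorded step with the same inputs, optionally overriding some."""
        previous = self.history[run_id]
        return self.run(previous.step, **{**previous.inputs, **overrides})


pipeline = Pipeline({
    "build": lambda commit, scripts: f"built {commit} with build scripts {scripts}",
})
first_id, _ = pipeline.run("build", commit="a1b2c3", scripts="v1")
second_id, output = pipeline.rerun(first_id, scripts="v2")  # same push, new scripts
print(second_id, output)
```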

How is our pipeline optimised?

We would like to mention three optimisations here:

Optimisation No. 1: Modularity

The pipelines of all components follow the same scheme. This means there are not four separate pipelines for four different components; instead, one central system maps the different requirements, e.g. in terms of technology or programming language.
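One way to express "same scheme, different components" is a shared sequence of steps filled in by per-component configuration. The components and commands below are hypothetical examples. `plan_build` only returns the commands. Each could then be executed with `subprocess.run(cmd, check=True)`.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ComponentConfig:
    """Hypothetical per-component settings plugged into the shared pipeline scheme."""
    test_cmd: List[str]
    build_cmd: List[str]
    package_cmd: List[str]


COMPONENTS: Dict[str, ComponentConfig] = {
    "backend": ComponentConfig(
        test_cmd=["pytest"],
        build_cmd=["python", "-m", "build"],
        package_cmd=["tar", "czf", "backend.tar.gz", "dist"],
    ),
    "frontend": ComponentConfig(
        test_cmd=["npm", "test"],
        build_cmd=["npm", "run", "build"],
        package_cmd=["tar", "czf", "frontend.tar.gz", "build"],
    ),
}


def plan_build(component: str) -> List[List[str]]:
    """Every component runs the same steps in the same order; only the commands differ."""
    config = COMPONENTS[component]
    return [config.test_cmd, config.build_cmd, config.package_cmd]


for cmd in plan_build("frontend"):
    print(" ".join(cmd))
```

Adding a fifth component then means adding one configuration entry, not a new pipeline.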

Optimisation No. 2: Avoiding side effects

Developers often face the following problem: a build server has been in use for years, and nobody dares to update it for fear of breaking something. With Cloudomation, builds always run on a freshly cloned VM. Updates are applied automatically, so the build environment is always up to date.
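The "fresh VM per build" rule can be enforced with a context manager that always removes the VM afterwards, even if the build fails. `FakeHypervisor` is a hypothetical in-memory stand-in. A real setup would call the API of whichever virtualisation platform is in use.

```python
import contextlib
import itertools
from typing import Iterator, Set


class FakeHypervisor:
    """Hypothetical in-memory stand-in for a real virtualisation API."""

    def __init__(self) -> None:
        self.vms: Set[str] = set()
        self._ids = itertools.count(1)

    def clone(self, snapshot: str) -> str:
        vm = f"{snapshot}-clone-{next(self._ids)}"
        self.vms.add(vm)
        return vm

    def destroy(self, vm: str) -> None:
        self.vms.discard(vm)


@contextlib.contextmanager
def fresh_build_vm(hv: FakeHypervisor, snapshot: str) -> Iterator[str]:
    """Clone a VM for exactly one build and always destroy it afterwards."""
    vm = hv.clone(snapshot)
    try:
        yield vm
    finally:
        hv.destroy(vm)  # runs on success and on failure, so no VM is ever reused


hv = FakeHypervisor()
with fresh_build_vm(hv, "build-base") as vm:
    print("building on", vm)
print("remaining VMs:", hv.vms)
```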

Optimisation No. 3: Speed

Once a day, a completely new VM is set up: the required tools, libraries and dependencies are installed, sources are cloned, users are set up, etc. The VM is then stopped and a snapshot is created. The builds use this snapshot, which is refreshed daily, carries no legacy baggage and can be deployed in seconds.
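Selecting the daily snapshot for a build can be sketched as a freshness check. The one-day limit mirrors the daily rebuild described above. The snapshot names and timestamps are made-up example data. Returning `None` signals that a new snapshot should be built first.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Optional

MAX_SNAPSHOT_AGE = timedelta(days=1)


def snapshot_for_build(snapshots: Dict[str, datetime], now: datetime) -> Optional[str]:
    """Return the newest snapshot if it is less than a day old, otherwise None."""
    if not snapshots:
        return None
    name, created = max(snapshots.items(), key=lambda item: item[1])
    return name if now - created < MAX_SNAPSHOT_AGE else None


snapshots = {
    "build-base-2024-05-01": datetime(2024, 5, 1, 2, 0, tzinfo=timezone.utc),
    "build-base-2024-05-02": datetime(2024, 5, 2, 2, 0, tzinfo=timezone.utc),
}
now = datetime(2024, 5, 2, 14, 30, tzinfo=timezone.utc)
print(snapshot_for_build(snapshots, now))
```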

CI/CD pipeline vs. local development environment

Normally, development takes place in local development environments. In these environments, all tools have to be set up, dependencies installed and the software made to run. This is not always easy and is often quite complex. In addition, bug fixes often have to be carried out in local development environments. (By the way: Remote Development Environments are a solution to these problems. Read more: What are Remote Development Environments?)

With an automated CI/CD pipeline, one might think that local development environments become obsolete. This is a fallacy: CI/CD pipelines are usually triggered by a push, whereas local development environments are used for everything that happens before the push. The CI/CD pipeline maps the internal deployment process (staging, testing), but not local development.

Summary

Our CI/CD pipeline, implemented with Cloudomation, essentially consists of four phases: push, build, deploy and integration testing. Within these phases, numerous jobs are executed. Depending on the component, different build flows are invoked during processing. In the deploy phase, integration tests for the internal system are started before the update, followed by the component-specific deploy procedure. Finally, integration tests run again. With Cloudomation, we can rebuild, redeploy or start further tests for the same push at any time. The pipeline is designed for modularity, avoidance of side effects and speed. Also worth mentioning: in practice, CI/CD pipelines sometimes lead to a fallacy regarding local development environments, namely that the pipeline can replace them.
